Google Analytics is far and away the most widely used website analytics tool on the planet, installed on an estimated 54 percent of all websites, including two-thirds of Fortune 500 company sites.

Its appeal goes beyond cost (though “free” is tough to beat). Google Analytics (hereafter, GA) is a powerful analytics package with extensive capabilities for analyzing website traffic and user behavior.

Despite its power and popularity, however, the tool does have some significant shortcomings. Relying on GA’s default settings and values will give you a tremendously distorted view of what’s really happening on your website.

For example, one of the most basic, high-level metrics is overall website visits (“sessions” in GA parlance). How much traffic did my website get last month (or week, day, year, etc.)?

Without any filtering, GA will give you a number far higher than reality. That’s because GA doesn’t automatically filter out “bot” traffic, which now accounts for more than half of all web traffic.

These bots are generally innocuous but may be hitting your site on behalf of competitors trying to scrape your pricing data, government agencies checking up on you, or hackers looking for vulnerabilities. What they are not is human visitors actually interested in your products or services.

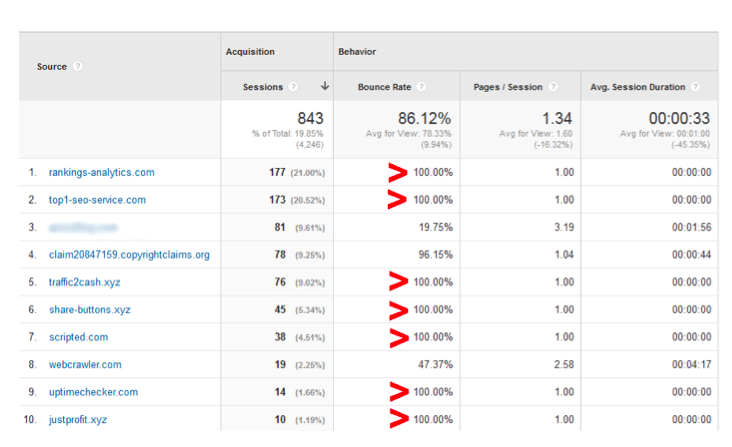

Unfiltered results are misleading in other ways as well. Visits from bots are often single-page, of very short duration, and with a 100 percent bounce rate. So the default traffic values reported by GA will not only inflate your session count and bounce rate, but also under-report visitor engagement metrics like average pageviews per visit and average time spent per visit.

Fortunately, it’s relatively easy to filter out bot traffic and address other shortcomings of GA using the tool’s “segment” capability. Here are four tips for getting better data out of GA.

Eliminate Bot Traffic Stats

While there are elaborate methods that claim to eliminate all bot traffic from GA results, setting up a simple segment is a quick way to eliminate most of this misleading noise from your results.

Let’s start with a sample website: 4,245 sessions over a recent period, according to GA’s default, unfiltered “All Sessions” segment. That seems a bit high, so bot traffic is suspected.

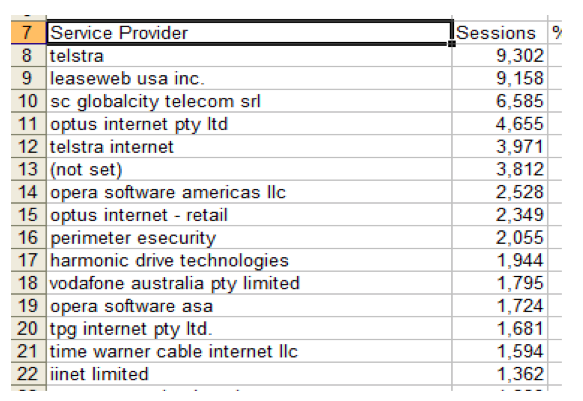

Two sources will help identify most of the bots. The first is the Audience > Technology > Network view. This view displays the various networks (“Service Providers” in GA’s terminology) sending traffic to your website. Bot visits are easy to spot because they typically hit only one page, have a zero or one-second visit duration, and have a 100 percent bounce rate. Looking at the top Service Providers for our sample site, several of these stand out right away:

Make a note of these, or export these results and grab the Service Provider names.

The second view to check is Acquisition > All Traffic > Referrals. This will display other websites referring traffic to your site. Again, we’re looking for single-page, very short duration, 100 percent bounce rate visit sources—and again, there are several among the top results:

Note these or export the results to grab the offending sources.
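If you export either view, the same three signals (one page, a zero or one-second duration, a 100 percent bounce rate) can be checked in a few lines of code instead of by eye. The following is only a rough sketch: the `SourceRow` fields and the sample numbers are made up for illustration and are not GA's export column names.

```python
from dataclasses import dataclass


@dataclass
class SourceRow:
    """One row from an exported report (field names are hypothetical)."""
    name: str                  # Service Provider or referral source
    sessions: int
    pages_per_session: float
    avg_duration_sec: float
    bounce_rate_pct: float


def looks_like_bot(row, max_duration_sec=1.0):
    """Heuristic from the article: single page, ~0-1 s visits, 100% bounce."""
    return (
        row.pages_per_session <= 1.0
        and row.avg_duration_sec <= max_duration_sec
        and row.bounce_rate_pct >= 100.0
    )


# Illustrative values only; substitute the rows from your own export.
rows = [
    SourceRow("krypt technologies", 310, 1.0, 0.0, 100.0),
    SourceRow("example university", 42, 3.4, 185.0, 38.1),
    SourceRow("linode", 127, 1.0, 1.0, 100.0),
]

suspects = [row.name for row in rows if looks_like_bot(row)]
print(suspects)
```

Treat the result as a list of candidates to review, not a verdict. A real visitor who leaves immediately produces the same numbers as a bot, so a source with only one or two sessions is weak evidence.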

Now it’s time to get rid of this nonsense. Near the top-center of the page, click on “+Add Segment.” Next, click the red “NEW SEGMENT” button.

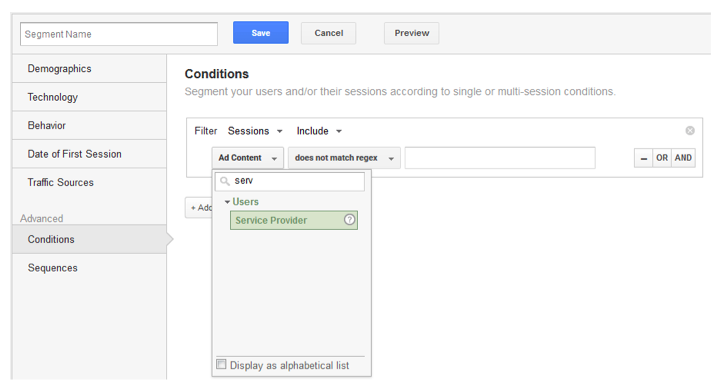

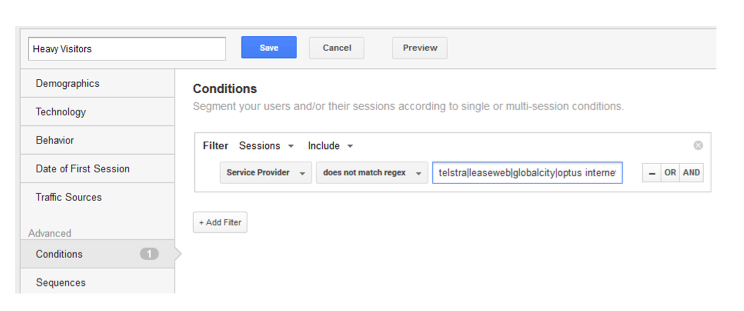

Select “Conditions” in the left menu. Use the search feature to change the Sessions value from the default (“Ad Content”) to “Service Provider.” Then change the Include Operator to “does not match regex”:

In the box to the right of the Include operator, type in the names of the Service Providers to exclude from our list, separated by the pipe (|) character, like this:

krypt|global frag|hubspot|quadranet|linode

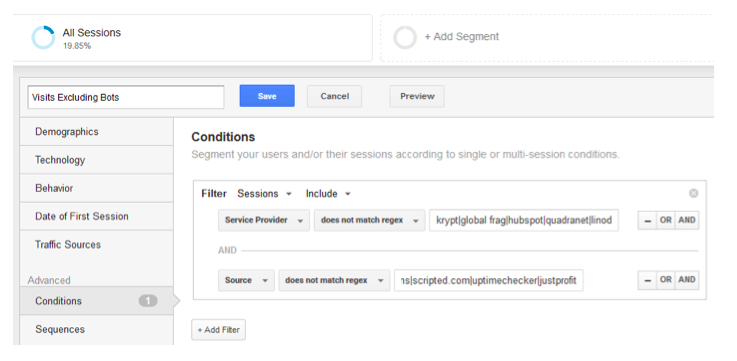
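To see what a pipe-separated pattern like this one does before pasting it into GA, you can try it against a few provider names locally. This sketch uses Python's `re` module as a stand-in for GA's regex engine and assumes matching is case-insensitive and succeeds on any partial match. The two provider names being tested are invented.

```python
import re

# The names from the example pattern above.
EXCLUDED_PROVIDERS = ["krypt", "global frag", "hubspot", "quadranet", "linode"]


def exclusion_pattern(names):
    """Join provider names with the pipe character, skipping blank entries."""
    return "|".join(name.strip().lower() for name in names if name.strip())


def passes_segment(service_provider, pattern):
    """Emulate 'does not match regex': keep the session if nothing matches."""
    return re.search(pattern, service_provider, flags=re.IGNORECASE) is None


pattern = exclusion_pattern(EXCLUDED_PROVIDERS)
print(pattern)  # krypt|global frag|hubspot|quadranet|linode

# Hypothetical provider names as they might appear in the Network view.
print(passes_segment("Krypt Technologies", pattern))          # False (excluded)
print(passes_segment("example manufacturing inc.", pattern))  # True (kept)
```

Because any partial match excludes a provider, short fragments are convenient but can also catch names you did not intend, so it is worth testing the pattern against your full exported list.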

Click the “And” boolean operator on the right, and repeat the process above for “Source” under Sessions to exclude bogus referral sites:

Give the new segment a name (such as “Visits Excluding Bots”) then click the blue “Save” button.

Finally, near the top-center of the page, click just off to the right of the “+Add Segment” box, to bring up a list of available segments. Leave “All Sessions” checked, and also check your new segment, then click “Apply.” Navigate to the Audience > Overview view.

Comparing the two segments reveals that our sample site was getting almost 38 percent of all traffic from bots(!). The “Sessions” number is of course lower with the bot traffic excluded, but it now represents actual human visits to the site. All engagement metrics (average pageviews per visit, average visit duration, bounce rate) look better with the bot traffic filtered out:
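The arithmetic behind that comparison is simple enough to check yourself. In the sketch below, only the 4,245 total comes from the sample site. The filtered session count and the human bounce rate are hypothetical values chosen to illustrate the calculation.

```python
def bot_share(all_sessions, human_sessions):
    """Fraction of all sessions that the bot-excluding segment removed."""
    return (all_sessions - human_sessions) / all_sessions


def blended_bounce_rate(human_sessions, human_bounce_pct,
                        bot_sessions, bot_bounce_pct=100.0):
    """Session-weighted bounce rate GA reports when bots are mixed in."""
    total = human_sessions + bot_sessions
    return (human_sessions * human_bounce_pct
            + bot_sessions * bot_bounce_pct) / total


ALL_SESSIONS = 4245       # "All Sessions" figure from the sample site
HUMAN_SESSIONS = 2640     # hypothetical count for the filtered segment
HUMAN_BOUNCE_PCT = 55.0   # hypothetical bounce rate of real visitors

share = bot_share(ALL_SESSIONS, HUMAN_SESSIONS)
reported = blended_bounce_rate(HUMAN_SESSIONS, HUMAN_BOUNCE_PCT,
                               ALL_SESSIONS - HUMAN_SESSIONS)

print(f"{share:.1%}")      # 37.8%
print(f"{reported:.1f}")   # 72.0
```

With these assumed inputs, a 55 percent bounce rate among real visitors would show up as roughly 72 percent in the unfiltered view, which is why the engagement metrics improve once bots are excluded.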

Create a “Real” Organic Search Segment

How much traffic does your site get from organic search? No, really? GA includes a pre-built “Organic Search” segment—but it’s not accurate.

To get an accurate count of search visits, you’ll need to create another custom segment. Start with the process detailed above, setting the Sessions value to “Source” and the Include value to “matches regex.” Enter the obvious search engines, separated by pipe characters:

google|bing|yahoo|aol

Click the “And” boolean operator. Set Sessions to “Medium”, Include to “does not contain,” and type “cpc” in the box. This will exclude paid search traffic from the segment.

Give the segment a name (e.g., “Real Organic Visits”) then click Save.
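The two conditions combine as a simple AND, which can be mirrored in code to sanity-check which source/medium pairs the segment will keep. This sketch assumes case-insensitive, partial regex matching. The function name and the sample pairs are illustrative, not anything GA exposes.

```python
import re

SEARCH_ENGINES = "google|bing|yahoo|aol"   # the pattern entered above


def is_real_organic(source, medium, engines=SEARCH_ENGINES):
    """Source matches the engine regex AND medium does not contain 'cpc'."""
    source_matches = re.search(engines, source, flags=re.IGNORECASE) is not None
    medium_is_unpaid = "cpc" not in medium.lower()
    return source_matches and medium_is_unpaid


print(is_real_organic("google", "organic"))           # True
print(is_real_organic("google", "cpc"))               # False: paid search
print(is_real_organic("duckduckgo.com", "referral"))  # False: not yet listed
```

The last case is the gap the next step addresses: smaller engines are left out until they are added to the pattern.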

Now for the legwork: GA mis-identifies many of the smaller search engines as referral sources. The result is that unfiltered GA results will overstate your referral traffic and under-report visits from organic search.

Navigate to the Acquisition > All Traffic > Referrals view to display a list of all referral sites sending you traffic. The bad news is, if your site gets a significant amount of traffic, it will likely take some time and effort to identify all the search engines in your referral list. The good news is, you’ll only need to do this once—then periodically double-check it to keep it updated.

Look through your referral list carefully to find search engines. Your list will be unique to your site, but search engines commonly misidentified by GA as referring sites include:

ask.com

webcrawler

duckduckgo

sites containing the word “search” (e.g., search.xfinity.com, mysearch.com)

wow.com

info.com

baidu

dogpile

centurylink.net

yandex

blackle

findamo.com

finded.co

Once you have your list compiled, again, near the top-center of the page, click just off to the right of the “+Add Segment” box, to bring up a list of available segments. Select your new organic search segment, then click “Edit” under “Actions” on the right:

Add all of your new search engines to your search engine list, separated by pipe characters. Click “Save” when finished.
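If your list is long, generating the pipe-separated string can save some typing and avoid typos. The sketch below builds it from the engines named earlier and escapes the dots in domain names so that “ask.com” matches only a literal dot. The escaping is an addition for precision, not a step the article requires, and the helper names are hypothetical.

```python
import re

BASE_ENGINES = ["google", "bing", "yahoo", "aol"]

# Entries from the list above; your own referral report will differ.
EXTRA_ENGINES = [
    "ask.com", "webcrawler", "duckduckgo", "search", "wow.com", "info.com",
    "baidu", "dogpile", "centurylink.net", "yandex", "blackle",
    "findamo.com", "finded.co",
]


def to_regex_term(name):
    """Lowercase the name and escape dots so they match a literal '.'."""
    return name.strip().lower().replace(".", r"\.")


def build_engine_pattern(*groups):
    """Join every name in every group with the pipe character."""
    return "|".join(to_regex_term(n) for group in groups for n in group)


pattern = build_engine_pattern(BASE_ENGINES, EXTRA_ENGINES)
print(pattern)

# Partial matching means the bare word "search" covers search.xfinity.com.
print(re.search(pattern, "search.xfinity.com") is not None)
```

Paste the printed string into the segment's regex box. Broad terms such as “search” will match any source containing that word, so check the resulting segment against your referral list for false positives.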

Voila! You’ve got a new segment that will show how much traffic your site actually gets from search. The difference from GA’s default organic search segment may be small, but in some cases can be close to 10 percent.

Create a “Real” Social Media Traffic Segment

As with organic search, GA provides an easy way to view sessions driven from social media sites. And as with organic search, it’s not terribly accurate.

Setting up this segment works just like setting up a segment to display actual search traffic as described above, except this time including social networks rather than search engines. This segment also requires some judgment: Should referral traffic from blogs be counted as social traffic? Some site owners and marketers say “yes,” some say “no.”

To build your list for this segment, comb through your list of referring sites to identify all of the social networks. In addition to the obvious (Twitter, Facebook, YouTube, plus.google.com, LinkedIn), look for sites like:

lnkd.in

t.co (Twitter’s URL shortener)

scoop.it

pinterest

alltop.com (optional)

stumbleupon

blogs (optional)

flipboard

getpocket

digg

hootsuite

paper.li

tumblr

medium

feedly

xing.com
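One caution when turning this list into a regex: very short domains can match inside unrelated hostnames, because a regex condition succeeds on any partial match. The sketch below uses Python's `re` module as a stand-in for GA's matching and shows the problem with “t.co” along with one possible fix, anchoring the term with `^` and `$`. The abbreviated pattern and the test sources are illustrative.

```python
import re

# Abbreviated social pattern; "t.co" is anchored so it must be the whole source.
SOCIAL_PATTERN = (
    r"twitter|facebook|youtube|plus\.google\.com|linkedin|lnkd\.in"
    r"|^t\.co$|scoop\.it|pinterest"
)


def is_social(source, pattern=SOCIAL_PATTERN):
    """True if the referral source matches the social-network pattern."""
    return re.search(pattern, source, flags=re.IGNORECASE) is not None


# Unanchored, "t\.co" is found inside "microsoft.com" (…soft.com).
print(re.search(r"t\.co", "microsoft.com") is not None)  # True: false positive

print(is_social("t.co"))           # True
print(is_social("microsoft.com"))  # False
print(is_social("pinterest.com"))  # True
```

The same check is worth running on other short or generic terms in your list, such as “medium” or “digg”, before relying on the segment's numbers.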

Identify Frequent and Heavy Visitors

GA provides a helpful report to identify the organizations (businesses, non-profits, government agencies) most frequently visiting your site. The problem (as with overall traffic) is that the list includes every network sending traffic your way: Businesses and government agencies of course, but also ISPs (Verizon, AT&T, etc.), spam referrers, hackers using proxies, dodgy foreign networks, and more.

The trick again is to create a segment that filters out all the nonsense so you can focus on the real heavy visitors to your site.

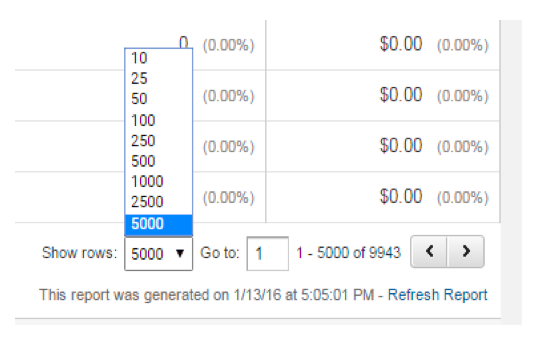

Start by navigating to the Audience > Technology > Network view. At the bottom-right of the screen, set the “Show rows” value to a large enough value to display all of your top visiting networks.

BONUS TIP: There’s no need to show all of the referring networks as this filter is designed to show only the most frequent and heaviest visitors. But what if you do (for some reason) want to see all of the networks referring traffic—and you have more than 5,000, the max GA will let you select?

At the top-right of your browser window, look at the last few characters in your browser’s address bar. If you need a number larger than 5,000, type it in here and GA will display a larger number of rows.

*END BONUS TIP*

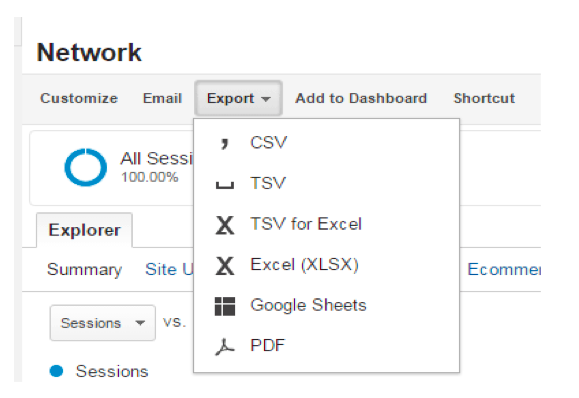

At the top of the GA page, export your table of referring networks to an Excel or CSV file:

Use the Excel file to start building your “does not match regex” list to exclude ISPs and other no-value sites in your filter:

As with the real organic search filter, the initial setup of this filter will take some time and effort—but thereafter, it’s just a matter of periodically updating it as new excludable sites are identified.

Set up a new segment selecting “Service Provider” under Sessions and, again, “does not match regex” under Include. Copy and paste in the list of sites to exclude based on the Excel file downloaded:

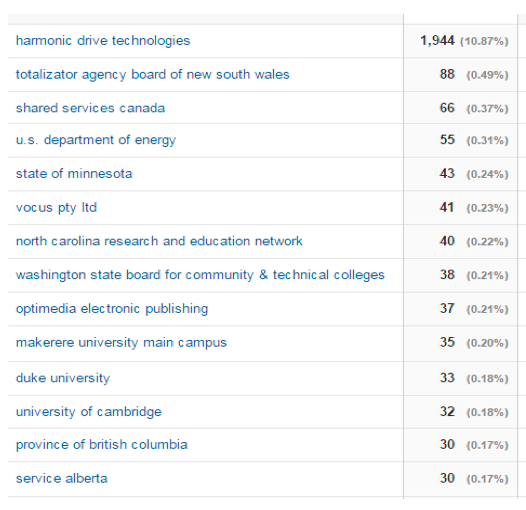
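You can also preview the effect of the exclusion list on the exported data before saving the segment. This is a minimal sketch: the provider rows and the short exclusion pattern are hypothetical placeholders for your own export and list, and matching is assumed to be case-insensitive and partial.

```python
import re

# Hypothetical (service provider, sessions) rows from the exported file.
provider_rows = [
    ("verizon online llc", 980),
    ("example aerospace corp", 64),
    ("at&t internet services", 720),
    ("state department of transportation", 41),
    ("comcast cable communications", 1150),
]

# A short stand-in for the much longer list you will build over time.
NO_VALUE_PATTERN = "verizon|at&t|comcast"


def top_visitors(rows, exclude_pattern, limit=10):
    """Drop rows matching the exclusion regex, then sort by sessions."""
    kept = [
        (name, sessions)
        for name, sessions in rows
        if re.search(exclude_pattern, name, flags=re.IGNORECASE) is None
    ]
    return sorted(kept, key=lambda row: row[1], reverse=True)[:limit]


for name, sessions in top_visitors(provider_rows, NO_VALUE_PATTERN):
    print(f"{sessions:>5}  {name}")
```

Any ISP that still appears in the output is a candidate for the next round of additions to the pattern.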

With a bit of tweaking, you’ll end up with a clean report showing only your actual top-visiting businesses and other organizations:

For analyzing website traffic, Google Analytics is a powerful and popular—but not perfect—tool. Fortunately, with a bit of creativity and knowledge of how segments work in the tool, you can create reports and dashboard views that give you a true picture of what’s happening on your site.

What about you? Are you a Google Analytics fan? Have you done this in-depth segmenting before? Had good results? Or bad? I would love to hear about your experiences with GA.

Photo Credit: bluefountainmedia via Compfight cc